Natural Language Processing (NLP) Interview Questions

Below is the list of the best NLP interview questions and answers.

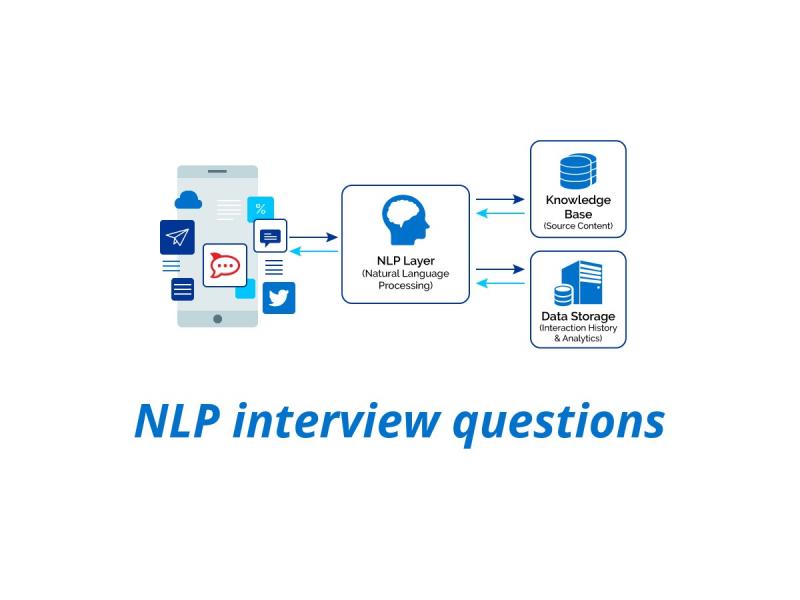

Natural Language Processing (NLP) is an automated way to understand and analyze natural languages and to extract the required information from such data by applying machine learning algorithms.

Below are a few major components of NLP.

- Entity extraction: It involves segmenting a sentence to identify and extract entities, such as a person (real or fictional), organization, location, event, etc.

- Syntactic analysis: It examines the grammatical structure of a sentence, that is, how the words are ordered and how they relate to each other.

- Pragmatic analysis: It interprets text using real-world knowledge and context, as part of the process of extracting information from text.

Natural Language Processing can be used for:

- Semantic Analysis

- Automatic summarization

- Text classification

- Question Answering

Some real-life examples of NLP are Apple's Siri, Google Assistant, and Amazon Echo.

NLP terminology can be grouped into the following areas:

- Weights and Vectors: TF-IDF, length(TF-IDF, doc), Word Vectors, Google Word Vectors

- Text Structure: Part-Of-Speech Tagging, Head of sentence, Named entities

- Sentiment Analysis: Sentiment Dictionary, Sentiment Entities, Sentiment Features

- Text Classification: Supervised Learning, Train Set, Dev (= Validation) Set, Test Set, Text Features, LDA

- Machine Reading: Entity Extraction, Entity Linking, DBpedia, FRED (lib) / Pikes

tf–idf or TFIDF stands for term frequency–inverse document frequency. In information retrieval, TFIDF is a numerical statistic that is intended to reflect how important a word is to a document in a collection or corpus. The weight grows with the number of times the word appears in the document and shrinks with the number of documents in the collection that contain the word.

According to The Stanford Natural Language Processing Group:
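
The idea can be illustrated with a minimal sketch in plain Python. It assumes one common formulation (relative term frequency multiplied by the natural log of the inverse document frequency); libraries often use smoothed variants, so the exact numbers will differ. The function name `tf_idf` and the sample documents are made up for this illustration.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Return one {term: weight} dict per tokenized document.

    Assumed formulation: tf = count / document length,
    idf = ln(number of documents / documents containing the term).
    """
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights

# Hypothetical toy corpus, already split into tokens.
docs = [
    "the cat sat on the mat".split(),
    "the dog sat on the log".split(),
    "the cat chased the dog".split(),
]
scores = tf_idf(docs)
# Terms of the first document, most distinctive first.
print(sorted(scores[0].items(), key=lambda kv: -kv[1]))
```

A word such as "the" that occurs in every document gets a weight of zero, while a word that occurs in only one document gets the highest weight for that document.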

A Part-Of-Speech Tagger (POS Tagger) is a piece of software that reads text in some language and assigns parts of speech to each word (and other token), such as noun, verb, adjective, etc.

PoS taggers use an algorithm to label terms in text bodies. These taggers make more complex categories than those defined as basic PoS, with tags such as “noun-plural” or even more complex labels. Part-of-speech categorization is taught to school-age children in English grammar, where children perform basic PoS tagging as part of their education.

Lemmatization reduces a word to its base or dictionary form, known as the lemma, using a vocabulary and morphological analysis of the word. In this process, inflectional endings are removed to return the base word.

Example: boy’s → boy, cars → car, colors → color.

So, the main aim of lemmatization, as well as of stemming, is to reduce the words of a sentence to their root forms so that different inflections of a word can be treated as the same term. Stemming does this by chopping off endings with simple rules, while lemmatization relies on a vocabulary.
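
The difference between the two approaches can be sketched with a toy example. Everything here is hypothetical: `naive_stem` uses a handful of hand-picked suffix rules and `LEMMAS` is a tiny stand-in for a real vocabulary, so this is an illustration of the idea rather than a usable stemmer or lemmatizer.

```python
# Tiny stand-in for a real vocabulary (hypothetical data).
LEMMAS = {
    "cars": "car",
    "colors": "color",
    "boy's": "boy",
    "better": "good",
    "ran": "run",
}

def naive_stem(word):
    """Strip a few common suffixes without consulting any vocabulary."""
    for suffix in ("'s", "ing", "es", "s"):
        # Keep at least three characters so short words are left alone.
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

def lemmatize(word):
    """Look the word up in the vocabulary; fall back to the lowercased word."""
    return LEMMAS.get(word.lower(), word.lower())

for w in ["cars", "boy's", "running", "better"]:
    print(w, naive_stem(w), lemmatize(w))
```

The rule-based stemmer handles regular plurals but can produce non-words (for example "runn" from "running"), whereas the vocabulary lookup can map irregular forms such as "better" to "good".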

Natural Language Processing aims to program computers to process large amounts of natural language data. Tokenization in NLP is the method of dividing text into tokens. You can think of a token as a word: just as words make up a sentence, tokens make up the text. Splitting the text into minimal units is an important step in NLP.
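
As a minimal sketch, word-level tokenization can be approximated with a regular expression. The pattern below is an assumption made for this example (words with an optional apostrophe part, plus standalone punctuation marks); real tokenizers handle many more cases.

```python
import re

# Assumed pattern: a word with an optional apostrophe part, or one punctuation mark.
TOKEN_PATTERN = re.compile(r"\w+(?:'\w+)?|[^\w\s]")

def tokenize(text):
    """Split text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

tokens = tokenize("Don't split hairs, split text!")
print(tokens)
```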

Latent Semantic Indexing (LSI), also called Latent Semantic Analysis, is a mathematical method that was developed to improve the accuracy of information retrieval. It finds the hidden (latent) relationships between words (semantics) by producing a set of concepts related to the terms and documents of a collection. The technique used for this purpose is singular value decomposition (SVD). It is generally useful for working on small sets of static documents.

A regular expression is a sequence of characters that defines a search pattern, mainly for use in pattern matching with strings. Regular expressions are built up from the following operations.

Example: if A and B are regular expressions, then

- A.B (concatenation) is a regular expression

- A | B (alternation) is a regular expression

- A* (Kleene star) is a regular expression
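
The three operations can be tried out with Python's `re` module. The sub-expressions `A` and `B` below are arbitrary placeholders chosen for this sketch.

```python
import re

# Placeholder sub-expressions for the example.
A, B = "ab", "cd"

concatenation = re.compile(f"(?:{A})(?:{B})")  # A followed by B
alternation = re.compile(f"(?:{A})|(?:{B})")   # either A or B
kleene_star = re.compile(f"(?:{A})*")          # zero or more repetitions of A

print(bool(concatenation.fullmatch("abcd")))
print(bool(alternation.fullmatch("cd")))
print(bool(kleene_star.fullmatch("ababab")))
print(bool(kleene_star.fullmatch("")))
```

`fullmatch` is used so that the whole string has to match the pattern, not just a prefix of it.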

Regular Grammars

A regular grammar is a 4-tuple (N, ∑, P, S ∈ N). Here N is the set of non-terminals, ∑ is the set of terminals, P is the set of productions, each of which must have one of a few restricted forms (for example A → a or A → aB in a right-regular grammar), and S is the start non-terminal.

Named-entity recognition (NER) is an information extraction method. It locates named entities in unstructured text and classifies them into categories such as locations, time expressions, organizations, percentages, and monetary values. NER helps users understand the subject of the text.

The differences between NLP and NLU are as follows:

| Natural Language Processing | Natural Language Understanding |
|---|---|
| NLP is the overall system that manages end-to-end conversations between computers and humans. | NLU is the part that solves the harder AI problem of working out what the input means. |
| NLP is related to both humans and machines. | NLU converts unstructured input into a structured form that machines can easily understand. |

The differences between NLP and CI (Conversational Interfaces) are as follows:

| Natural Language Processing | Conversational Interfaces |
|---|---|
| NLP is a kind of artificial intelligence technology that identifies, understands, and interprets user requests expressed in natural language. | CI is a user interface that mixes voice, chat, or other natural language input with images, videos, or buttons. |
| NLP aims to make the machine understand what the user means. | A conversational interface provides only what the user needs and no more. |

Differences between AI, Machine Learning, and NLP

| Artificial Intelligence | Machine Learning | Natural Language Processing |
|---|---|---|
| AI is the set of techniques for creating smarter machines. | Machine Learning is the term used for systems that learn from experience. | NLP is the set of systems that can understand human language. |
| AI may rely on human-supplied rules and knowledge. | ML learns from data rather than from explicitly programmed rules. | NLP links computer and human languages. |
| AI is a broader concept than Machine Learning. | ML is a narrower concept and is a subset of AI. | NLP is a subfield of AI that often uses ML. |

Masked language modelling is a training process in which the model must recover the original output from a corrupted input: some words of a sentence are hidden (masked), and the model predicts them from the other words of the sentence. This helps the model learn deep representations that are useful in downstream tasks.
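
The corruption step can be sketched as follows. This only shows how a masked input and its prediction targets might be prepared; it does not train a model. The `[MASK]` placeholder and the 15% default masking rate follow the convention of BERT-style pretraining, and the function name `mask_tokens` is made up for this example.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Return (corrupted tokens, {position: original token}) for one sentence."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    corrupted, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            corrupted.append(MASK)  # hide the token from the model
            targets[i] = tok        # the model should predict this
        else:
            corrupted.append(tok)
    return corrupted, targets

sentence = "the model predicts hidden words from their context".split()
corrupted, targets = mask_tokens(sentence, mask_prob=0.3)
print(corrupted)
print(targets)
```

During training, a model would receive `corrupted` as input and be scored on how well it predicts the tokens stored in `targets`.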

Pragmatic Analysis: It deals with outside world knowledge, which means knowledge that is external to the documents and/or queries. Pragmatic analysis reinterprets what was said in terms of what was actually meant, deriving the aspects of language that require real-world knowledge.

Dependency parsing is a form of syntactic parsing. It is the task of analyzing a sentence and assigning a syntactic structure to it. The most widely used syntactic structure is the parse tree, which can be generated using parsing algorithms. Parse trees are useful in applications such as grammar checking and, more importantly, play a critical role in the semantic analysis stage.

Pragmatic ambiguity arises when a sentence has multiple interpretations because its meaning is not specific and depends on the context. There are sentences whose intended sense cannot be determined from the wording alone; this possibility of multiple interpretations gives rise to ambiguity.

For example, "Do you want a cup of coffee?" can be either an informative question or an offer to make a cup of coffee.

The word "perplexed" means "puzzled" or "confused", so perplexity in general means the inability to deal with something complicated or poorly specified. In NLP, perplexity is a way to measure the extent of uncertainty in predicting some text.

Perplexity is used to evaluate language models. It can be high or low: low perplexity is good because the model is less uncertain about the text it predicts, while high perplexity is bad because the model is more uncertain.
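
As a minimal sketch, perplexity can be computed as the exponential of the average negative log-probability that a model assigns to each token of a text. The probability lists below are invented numbers standing in for the output of a language model.

```python
import math

def perplexity(token_probs):
    """exp of the average negative log-probability of the observed tokens."""
    n = len(token_probs)
    avg_neg_log = -sum(math.log(p) for p in token_probs) / n
    return math.exp(avg_neg_log)

# Hypothetical per-token probabilities from two models on the same text.
confident = perplexity([0.9, 0.8, 0.95])
uncertain = perplexity([0.1, 0.2, 0.05])
print(confident, uncertain)
```

A model that assigns probability 1/4 to every token has a perplexity of 4, which can be read as the model being as uncertain as if it were choosing uniformly among four options at each step.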

An N-gram in NLP is simply a sequence of n words. Some sequences appear more frequently than others; for example, consider these three sequences:

- New York (2-gram)

- The Golden Compass (3-gram)

- She was there in the hotel (6-gram)

From the above sequences, we can easily see that the first one appears more frequently than the other two, and the last one is not seen that often. If we assign a probability to the occurrence of each n-gram, it helps in making next-word predictions and in correcting spelling errors.
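
Extracting n-grams and estimating a next-word probability from counts can be sketched as follows. The corpus is a made-up sentence, and `bigram_probability` uses a plain maximum-likelihood estimate without smoothing, which is an assumption made for this example.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all contiguous n-token sequences as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bigram_probability(tokens, prev, word):
    """Maximum-likelihood estimate of P(word | prev), with no smoothing."""
    bigram_counts = Counter(ngrams(tokens, 2))
    # Count prev only where it has a following word.
    prev_counts = Counter(tokens[:-1])
    if prev_counts[prev] == 0:
        return 0.0
    return bigram_counts[(prev, word)] / prev_counts[prev]

# Hypothetical toy corpus.
corpus = "i love new york and i love new ideas".split()
print(ngrams(corpus, 3)[:2])
print(bigram_probability(corpus, "new", "york"))
```

In this corpus "new" is followed once by "york" and once by "ideas", so each continuation gets half of the probability mass.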

The meta-model in neuro-linguistic programming (a different field that shares the NLP abbreviation) is a set of questions designed to specify information and to challenge and expand the limits of a person's model of the world. It responds to the distortions, generalizations, and deletions in the speaker's language.

The Milton Model in neuro-linguistic programming is a set of language patterns used to help people make desirable changes and solve difficult problems. It is also used for inducing trance or an altered state of consciousness in order to access unconscious resources.
